There’s been no shortage of bold claims about AI in the legal world. Major platforms now promise faster research, better answers, and—perhaps most ambitiously—tools that are effectively “hallucination-free.” A recent Stanford study gives a more nuanced picture. What follows isn’t original research, but a guided walkthrough of that paper—what it set out to test, what it found, and why its conclusions matter.

What the Stanford Researchers Actually Tested

The study, “Hallucination-Free? Assessing the Reliability of Leading AI Legal Research Tools,” is one of the first rigorous, preregistered evaluations of commercial legal AI systems.

Rather than relying on anecdotes or isolated failures, the researchers constructed a dataset of more than 200 legal queries designed to mimic real-world usage. These included straightforward doctrinal questions, complex or evolving legal issues, factual lookups, and questions built on false assumptions.

The goal was simple but important: to test not just whether the tools could produce answers, but whether those answers were both correct and properly grounded in legal authority.

The Promise of RAG—and Why It Falls Short

Much of the marketing around legal AI focuses on retrieval-augmented generation (RAG). In theory, RAG systems reduce hallucinations by pulling relevant legal documents first and then generating answers based on those sources. The Stanford paper tests that claim seriously.

What it finds is that legal reasoning doesn’t map neatly onto document retrieval. Law isn’t just a collection of static facts; it’s an evolving system of interpretations, precedents, and jurisdictional nuances. Even identifying the “right” sources can require legal judgment.

As the researchers note, errors can creep in at multiple points: the system may retrieve the wrong documents, misinterpret the right ones, or apply them incorrectly.
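To make those failure points concrete, here is a minimal sketch of a retrieve-then-generate pipeline. It is not how any commercial legal tool works: the corpus, the citations, the word-overlap ranking, and the function names `retrieve` and `generate` are all invented for illustration, and the "generation" step is a stub standing in for a language model.

```python
from dataclasses import dataclass


@dataclass
class Document:
    citation: str
    text: str


# Toy corpus. The citations and texts are invented placeholders, not real authorities.
CORPUS = [
    Document("Case A (hypothetical)", "a contract requires offer acceptance and consideration"),
    Document("Case B (hypothetical)", "negligence requires duty breach causation and damages"),
    Document("Case C (hypothetical)", "an offer may be revoked before acceptance"),
]


def tokenize(text):
    """Lowercase the text and split it into a set of words."""
    return set(text.lower().split())


def retrieve(query, corpus, k=2):
    """Stage 1: rank documents by word overlap with the query.

    Failure point 1: overlap is not relevance, so the wrong document
    can outrank the right one.
    """
    q = tokenize(query)
    scored = [(len(q & tokenize(d.text)), d) for d in corpus]
    scored = [(score, d) for score, d in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:k]]


def generate(query, sources):
    """Stages 2 and 3 (stub): a real system would have a model read the
    sources and apply them to the question.

    Failure points 2 and 3: the model can misread a retrieved source, or
    apply it to a proposition it does not support. This stub only lists
    the citations; it does not check that they support anything.
    """
    if not sources:
        return "No supporting authority found."
    cited = "; ".join(d.citation for d in sources)
    return f"Answer to '{query}' based on: {cited}"


query = "what does a contract require offer acceptance"
print(generate(query, retrieve(query, CORPUS)))
```

Note that the sketch cites "Case C" simply because it shares words with the question. Having a source attached to an answer is not the same as that source supporting the answer.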

How “Hallucination-Free” Are These Tools?

This is where the study directly challenges industry claims.

While the tested tools performed better than general-purpose models like GPT-4, they still produced hallucinations (false or misleading outputs) at meaningful rates. The paper reports hallucination rates of between 17% and 33% across the tools it evaluated.

That’s not a marginal issue. In legal practice, even a small error rate can have serious consequences.

The study also found significant differences between systems. Some tools prioritized answering questions (and made more mistakes), while others were more conservative, declining to answer a large share of queries but producing fewer incorrect responses.

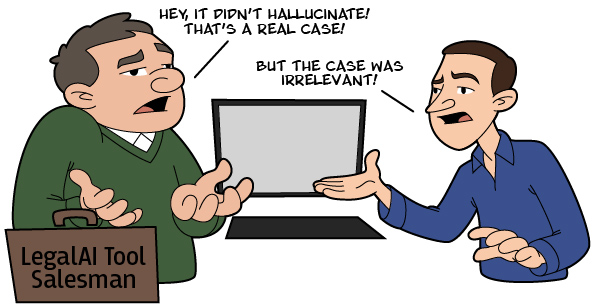

The More Subtle Problem: Misleading but Plausible Answers

One of the most valuable contributions of the paper is how it reframes “hallucinations.”

Not all errors are obvious fabrications. In fact, some of the most concerning failures involve answers that look credible—because they cite real cases—but are actually misgrounded. That is, the cited authority doesn’t support the proposition being claimed.

This kind of mistake is especially dangerous. A fabricated case can be spotted; a misapplied real case often cannot, at least not without careful review.
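One way to see why this matters for the headline numbers is to score each response on two separate questions: is the answer correct, and do the cited authorities support it? The sketch below is a simplified reading of that idea, not the paper's actual coding scheme. The `Response` fields, the label names, and the ten sample responses are all assumptions made up for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Response:
    answered: bool  # False if the tool declined to answer
    correct: bool   # is the stated proposition legally accurate?
    grounded: bool  # do the cited authorities actually support it?


def label(r):
    """Assign one label per response (simplified, hypothetical scheme)."""
    if not r.answered:
        return "declined"
    if r.correct and r.grounded:
        return "accurate"
    # Either the answer is wrong, or it is right but the citation
    # does not support it (misgrounded).
    return "hallucinated"


def rates(responses):
    """Return the share of responses carrying each label."""
    counts = Counter(label(r) for r in responses)
    total = len(responses)
    return {name: counts[name] / total for name in ("accurate", "hallucinated", "declined")}


# Invented sample: 6 accurate, 1 incorrect, 1 misgrounded, 2 declined.
sample = (
    [Response(True, True, True)] * 6
    + [Response(True, False, True)]
    + [Response(True, True, False)]
    + [Response(False, False, False)] * 2
)
print(rates(sample))
```

Under this scheme the misgrounded response counts against the tool even though its answer is technically right, and a tool that declines more often can lower its hallucination rate without becoming more useful.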

When AI Accepts False Premises

Another striking finding comes from how these systems handle incorrect assumptions.

When asked questions built on false premises—like misstatements of case outcomes or legal doctrine—AI tools often accept the premise and generate answers around it, rather than correcting the user.

This mirrors earlier research on general-purpose models and highlights a persistent issue: these systems tend to go along with a user's framing rather than challenge it, even when accuracy demands pushback.

What the Study Means for Lawyers

The takeaway from the Stanford study is not that legal AI is useless—far from it. These tools clearly offer efficiency gains and can outperform general-purpose models in many contexts.

But they are not, despite marketing claims, hallucination-free.

The authors emphasize that lawyers remain responsible for verifying outputs, checking citations, and exercising independent judgment. In fact, the need for verification may offset some of the promised efficiency gains, since each claim and citation may require confirmation.

The Bottom Line: Don’t Rely on the Marketing

If there’s one takeaway worth emphasizing, it’s this: the gap between marketing claims and empirical evidence is real.

The Stanford paper doesn’t argue against using AI in legal research. Instead, it situates these tools where they belong—as useful starting points, not authoritative endpoints.

If you’re working in or around the legal field, it’s worth reading the original study in full. Not because it settles the debate, but because it grounds that debate in actual data rather than assumptions.

And in a domain like law, that distinction matters.