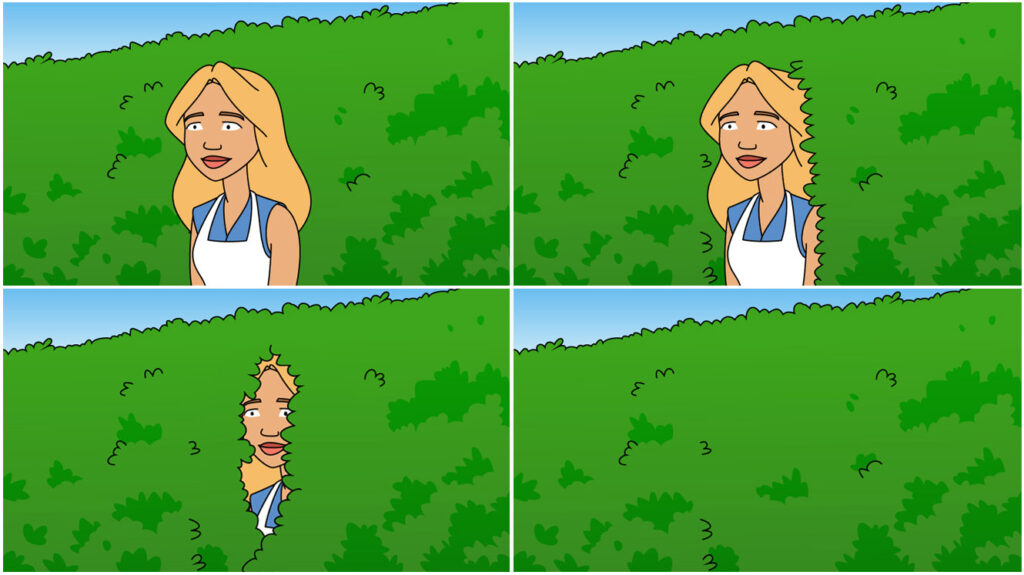

The Quiet Retreat of Alice and § 101

The Alice character from our Patent Beast Alice v. CLS series retreating into the bushes, à la Homer Simpson.

The Collapse of Utility Rejections Under § 101

Dennis Crouch, in his excellent blog, Patently-O, has written an interesting article that examines a handful of unusual patent applications rejected for lack of “utility” under 35 U.S.C. § 101, including claims involving time travel, cold fusion, black-hole energy, melanin-based glucose synthesis, and room-temperature superconductors. The article argues that although utility rejections are now rare, they remain important for filtering out scientifically implausible or insufficiently demonstrated inventions, and for preventing speculative “paper patents” from distorting innovation incentives and the public record.

How Alice Changed Modern Patent Examination

I think the dramatic drop in § 101 rejections is the result of several converging realities.

First, the USPTO has largely internalized the backlash against overaggressive Alice examination. The 2019 Revised Patent Subject Matter Eligibility Guidance (the “2019 PEG”), issued under Director Andrei Iancu, fundamentally changed examiner behavior, and the Office never really went back. Examiners learned, both institutionally and practically, that broad “this is abstract” rejections were being reversed at the PTAB with increasing frequency.

Why Patent Drafting Strategy Matters More Than Ever

Second, drafting practice has quietly but significantly matured, and patent prosecutors adapted. Early post-Alice applications often looked like pre-Alice business method filings with “on a computer” language sprinkled in. Today, most experienced prosecutors know they must:

- anchor claims in system architecture,

- emphasize data transformation,

- identify concrete technical problems,

- describe operational constraints,

- avoid result-oriented functional language,

- and tie the invention to machine behavior or system performance.

That evolution matters enormously.

Abstract Ideas vs. Technological Implementations

In my view, courts and examiners are not actually hostile to software. They are hostile to claims that appear untethered from engineering reality.

When software claims read like:

- “receive information,”

- “analyze information,”

- “determine a result,”

- “display output,”

with no meaningful technical implementation details, the claims start looking like disembodied logic or business objectives.

But when claims instead recite:

- specific processing architectures,

- synchronization mechanisms,

- distributed processing,

- latency reduction,

- memory management,

- protocol handling,

- resource allocation,

- data structure improvements,

- or concrete control flows,

they suddenly begin to look much more like traditional engineering inventions. That is why some applications get through and some do not.

Solving Technological Problems in Technological Ways

The real dividing line is often whether the claims appear to solve a technological problem in a technological way.

The irony, of course, is that this distinction does not appear anywhere in the statutory text of 35 U.S.C. § 101. It is a judicially created filtering doctrine that has drifted toward a kind of quasi-obviousness, or “smell test,” analysis. Even the recent criticism from groups like the American Intellectual Property Law Association (AIPLA) reflects growing discomfort that Alice step two effectively resurrected the old pre-1952 “inventive concept” requirement.

ColdFusion, Software Patents, and Concrete System Claims

The article’s ColdFusion observation is especially interesting because many ColdFusion-based inventions involve workflow orchestration, server-side processing, database interaction, and dynamic application behavior. Those technologies can absolutely survive § 101 when claimed as concrete computing systems rather than abstract automation concepts.

I suspect many successful applications in that space succeed because:

- the claims stay grounded in system mechanics,

- the specification explains technical implementation details,

- and the drafting avoids claiming the business result itself.

In other words, they claim how the system achieves the result, not merely the desired outcome.

The Quiet Retreat of Aggressive § 101 Enforcement

I also think examiners increasingly recognize an uncomfortable practical reality: modern computing innovation is largely software innovation. If § 101 were applied in its harshest theoretical form, enormous portions of contemporary technology would become effectively unpatentable. The system has quietly adjusted to avoid that outcome.

So although Alice formally remains intact, operationally we may be watching § 101 slowly retreat toward a narrower gatekeeping function rather than the broad invalidation weapon it became from roughly 2014 to 2021.

For related articles concerning § 101 issues, see: The Harsh Reality of Sec 101 Appeals